Training evaluation models are the difference between guessing at client progress and proving it with real data. Many experienced coaches still rely on scattered notes, memory, and intuition to judge results. That approach works until you try to scale programs, defend coaching ROI, or run structured team coaching engagements.

Most coaches today are trying to answer one question: “Are my sessions actually creating measurable change for clients?” Without structure, goal tracking becomes messy, follow-ups slip, and no-shows rise even with automated reminders and scheduling tools in place.

This is where training evaluation models give coaches a repeatable way to measure progress, refine programs, and demonstrate real impact. As executive coach Marshall Goldsmith often reminds leaders, “What gets measured gets improved.”

In this article, you’ll learn about practical decision frameworks, real coaching use cases, and when to apply different models to strengthen client outcomes and scale delivery without losing quality.

Key Takeaways

- Choosing the right training evaluation model helps coaches measure program impact and improve client outcomes systematically.

- Tracking metrics like reaction, learning, behavior, and results ensures insights are actionable.

- Using structured models reduces admin chaos, improves follow-ups, and helps automate check-ins, reminders, and goal tracking.

- Comparing manual versus automated tracking highlights efficiency gains and identifies areas where coaching ROI can be proven.

- When goals, actions, stakeholder feedback, and progress reports live inside one platform like Simply.Coach, coaches can measure outcomes without juggling spreadsheets or scattered notes.

What Are Training Evaluation Models?

Training evaluation models are structured frameworks used to assess whether a learning or development program produces measurable results. They help practitioners move beyond subjective impressions by evaluating what participants learned, how their behavior changed, and whether those changes led to meaningful outcomes.

In traditional training environments, evaluation is relatively straightforward because programs usually deliver standardized skills to groups. Outcomes can often be measured through knowledge tests, skill assessments, or performance improvements after training.

However, evaluating coaching and leadership development programs is more complex. Coaching outcomes are harder to measure because they involve:

- Individualized goals that differ from client to client.

- Delayed behavior change that emerges gradually over time.

- Sponsor influence where managers or HR leaders define success alongside the client.

- Context dependency, where workplace culture and team dynamics affect outcomes.

- Attribution challenges, since improvements may result from multiple factors beyond coaching.

Training evaluation models provide a structured way to navigate these complexities, helping coaches and development professionals systematically track progress, behavior change, and long-term impact.

As James D. Kirkpatrick notes, “Evaluation is not an afterthought to training, but rather is meant to be integrated into the entire learning and development process.” In coaching, this means tracking client progress, measuring skill adoption, and using insights to drive consistent, long-term transformation.

Also read: Competency Models for Coaches: How to Align, Measure, and Drive Impact

Once the concept is clear, the next step is understanding which evaluation frameworks coaches commonly use.

10 Best Training Evaluation Models for Coaching Professionals

Several evaluation frameworks exist, but a few models consistently work well for coaches who want measurable outcomes without heavy administrative overhead. Below are some of the most widely used training evaluation models that help coaches track learning and behavior change.

| Training Evaluation Model | Best For | Benefits | Limitations |

| Kirkpatrick Model | Leadership development cohorts and corporate L&D programs | Links learning outcomes to behavioral transfer and business results | Difficult to isolate training impact from external variables |

| CIRO Model | Leadership diagnostics and coaching needs analysis | Evaluates organizational context before measuring outcomes | Requires intensive pre-program diagnostic assessment |

| Phillips ROI Model | Executive coaching, sales enablement, and revenue training | Quantifies financial ROI and program cost–benefit | ROI attribution requires complex data modeling |

| Brinkerhoff Model | Cohort coaching and enterprise transformation initiatives | Identifies success and failure patterns in program outcomes | Focuses primarily on extreme performance cases |

| Kaufman’s Model | Enterprise leadership academies and large L&D ecosystems | Evaluates program inputs, outputs, and societal impact | Multi-level framework increases implementation complexity |

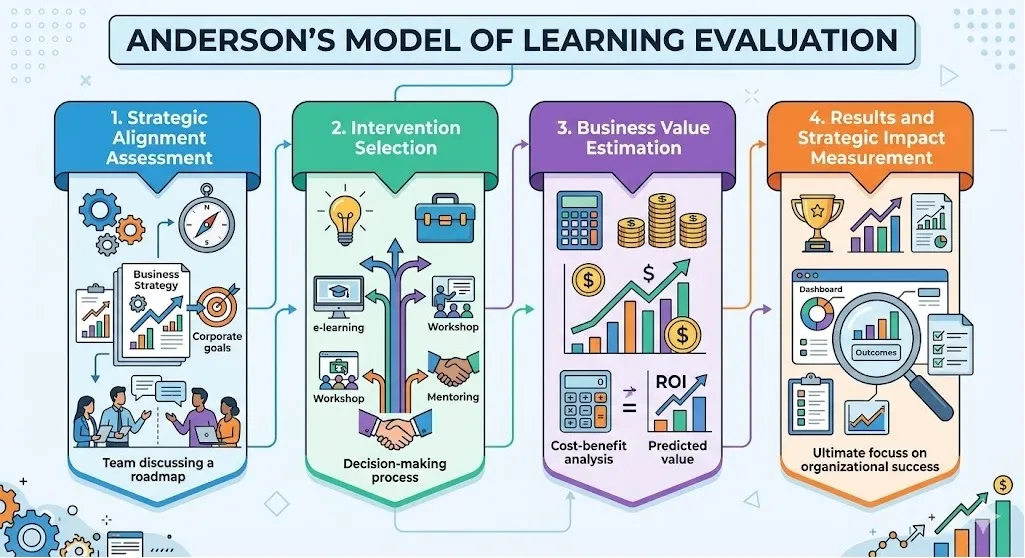

| Anderson’s Model | Strategy-aligned coaching initiatives and leadership pipelines | Aligns coaching outcomes with strategic business objectives | Requires robust organizational performance data |

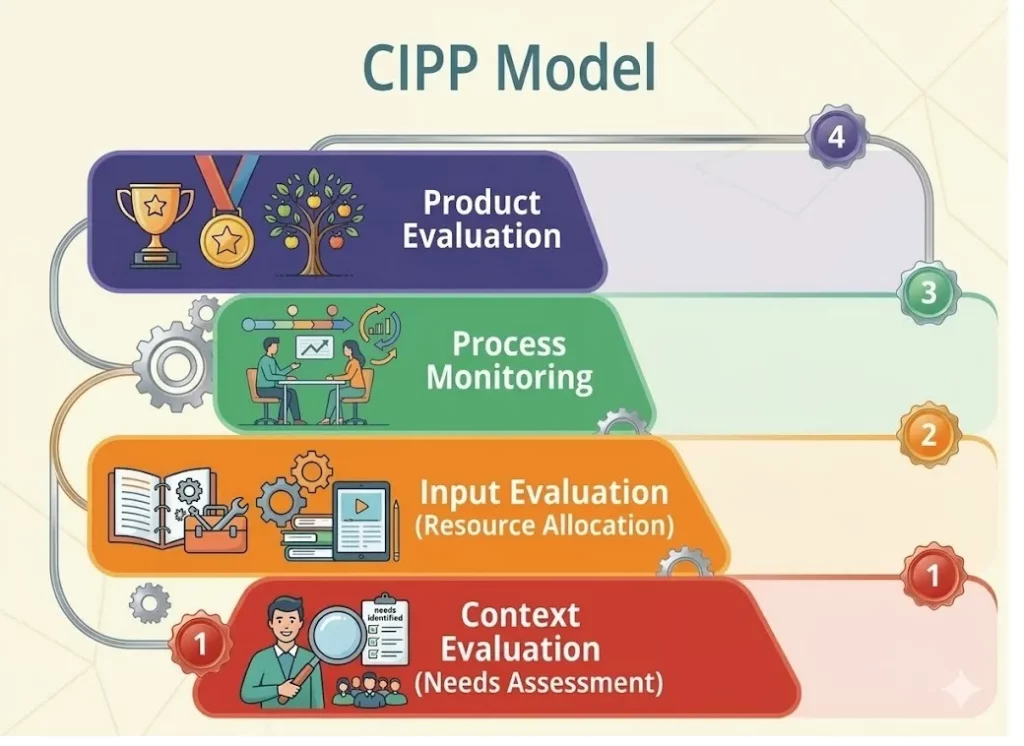

| CIPP Model | Multi-phase leadership or capability development programs | Evaluates context, design quality, and implementation delivery | Data collection and evaluation cycles are time-intensive |

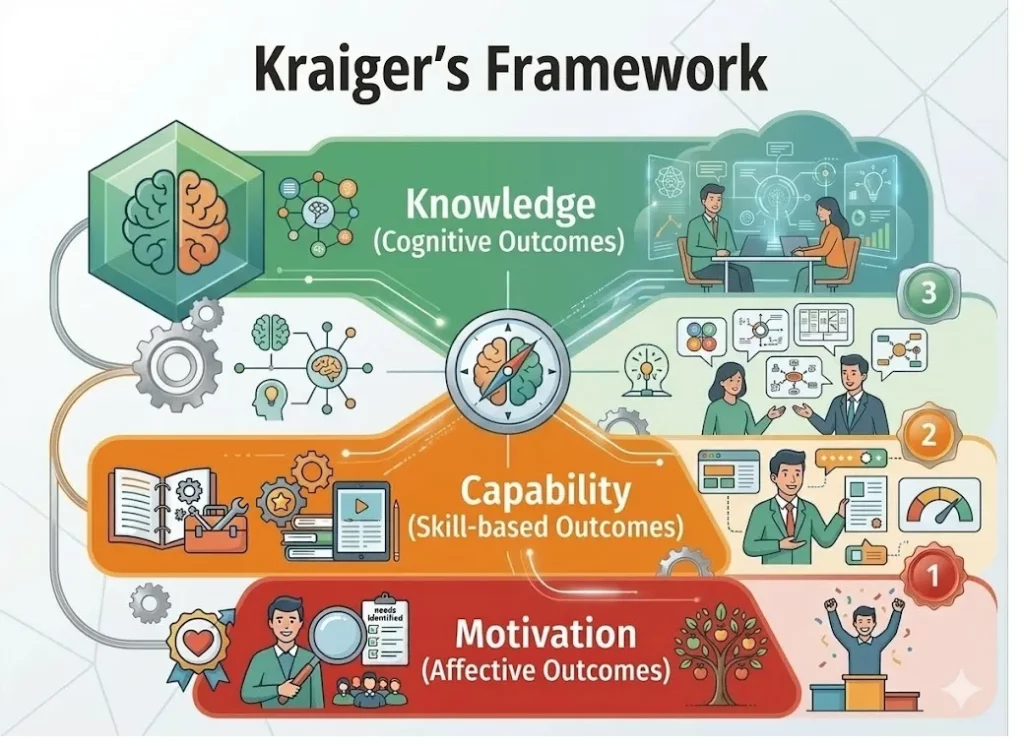

| Kraiger’s Framework | Competency development and behavioral skills coaching | Separates learning into cognitive, skill-based, and motivational outcomes | Does not measure organizational or financial impact |

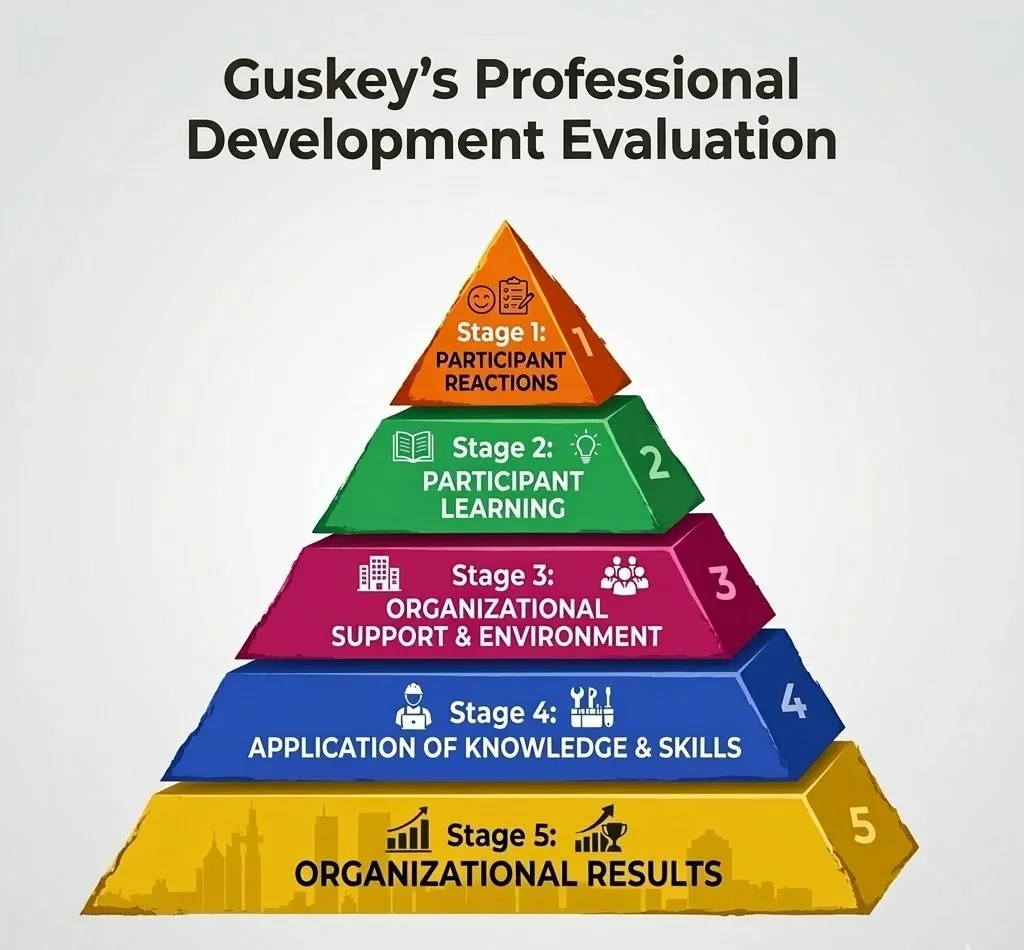

| Guskey’s Model | Organizational coaching and professional development systems | Links participant learning to team and organizational performance | Requires environmental and organizational metrics |

| ADDIE Model | Designing structured coaching curricula and training journeys | Provides systematic instructional design and program iteration | Emphasizes design over measurable impact evaluation |

1. Kirkpatrick Model

The Kirkpatrick Model is one of the most widely used training evaluation models for measuring learning and behavioral impact in coaching and professional development. Developed by Donald Kirkpatrick, it evaluates coaching outcomes across four levels, from session experience to measurable real-world results.

For leadership, executive, and career coaches working with founders, senior managers, or high-performing professionals, it connects coaching insights to observable behavioral change. Instead of relying on satisfaction alone, the model helps track habit adoption, leadership effectiveness, and decision-making improvements.

Framework breakdown:

- Reaction: Measures how clients respond immediately after sessions. Coaches typically track session engagement scores, satisfaction ratings, and perceived relevance. Low reaction scores often signal issues with session structure, pacing, or clarity, factors that can eventually lead to disengagement or no-shows.

- Learning: Evaluates what clients actually absorbed during coaching. This includes frameworks learned, mindset shifts, or decision-making models introduced in sessions. Coaches validate learning through reflection prompts, quick assessments, or structured pre-session check-ins.

- Behavior: Tracks whether insights turn into real action between sessions. This includes habit adoption, communication improvements, or leadership behavior changes. Metrics often include goal completion rates, habit adherence, and feedback from peers, managers, or accountability partners.

- Results: Measures the final outcomes generated through coaching engagement. This may include promotion readiness, team performance improvement, or revenue growth.
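To make the four levels concrete, here is a minimal Python sketch of how a coach might roll client data up into Level 1 to 3 indicators. Everything in it is an assumption for illustration: the `ClientRecord` fields, the 1 to 5 rating scale, the 0 to 100 assessment scale, and the metric names are hypothetical and are not defined by the Kirkpatrick Model or by any coaching platform. Level 4 (Results) is left out because it usually depends on sponsor or business data.

```python
from __future__ import annotations

from dataclasses import dataclass
from statistics import mean


@dataclass
class ClientRecord:
    """Hypothetical per-client data a coach might log over an engagement."""
    name: str
    session_ratings: list[float]  # Level 1 (Reaction): post-session ratings, assumed 1-5 scale
    pre_score: float              # Level 2 (Learning): assessment score before coaching (assumed 0-100)
    post_score: float             # Level 2 (Learning): the same assessment after coaching
    goals_set: int                # Level 3 (Behavior): action goals agreed in sessions
    goals_completed: int          # Level 3 (Behavior): goals the client actually completed
    habit_days_planned: int       # Level 3 (Behavior): days the target habit was planned
    habit_days_logged: int        # Level 3 (Behavior): days the habit was actually practiced


def safe_ratio(part: int, whole: int) -> float:
    """Return part / whole rounded to two decimals, or 0.0 when nothing was planned."""
    return round(part / whole, 2) if whole else 0.0


def kirkpatrick_summary(client: ClientRecord) -> dict[str, float]:
    """Summarize Levels 1-3 for one client as simple indicators."""
    return {
        "reaction_avg": round(mean(client.session_ratings), 2),
        "learning_gain": client.post_score - client.pre_score,
        "goal_completion_rate": safe_ratio(client.goals_completed, client.goals_set),
        "habit_adherence": safe_ratio(client.habit_days_logged, client.habit_days_planned),
    }


if __name__ == "__main__":
    founder = ClientRecord("Founder A", [4.0, 4.5, 5.0], 55.0, 72.0, 8, 6, 24, 18)
    print(kirkpatrick_summary(founder))
    # {'reaction_avg': 4.5, 'learning_gain': 17.0, 'goal_completion_rate': 0.75, 'habit_adherence': 0.75}
```

Keeping each level as a separate indicator, rather than blending them into one score, preserves the model's main diagnostic value: a client can rate sessions highly (Level 1) while completing few goals (Level 3), and that gap is exactly what the coach needs to see.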

Why it works:

- Moves coaching beyond satisfaction surveys by linking session insights to behavioral and performance outcomes.

- Works well for executive and leadership coaching where clients must demonstrate measurable change to sponsors or HR leaders.

- Supports ROI conversations for corporate coaching programs by mapping behavior shifts to business metrics.

- Creates structured accountability for clients by tracking actions and results between sessions.

When to use this model?

- Use this model when you coach founders or managers and need to track how leadership behaviors such as delegation, decision-making, or communication improve between sessions.

- It is particularly helpful when organizations funding executive coaching want clear, measurable outcomes from leadership development programs.

- Coaches often apply this model when running structured multi-session coaching engagements that require consistent progress tracking.

- It also works well when linking coaching insights to outcomes like promotion readiness, leadership effectiveness, or improved team productivity.

Also read: Coaching Tracking Tools: How Coaches Measure Progress and Impact

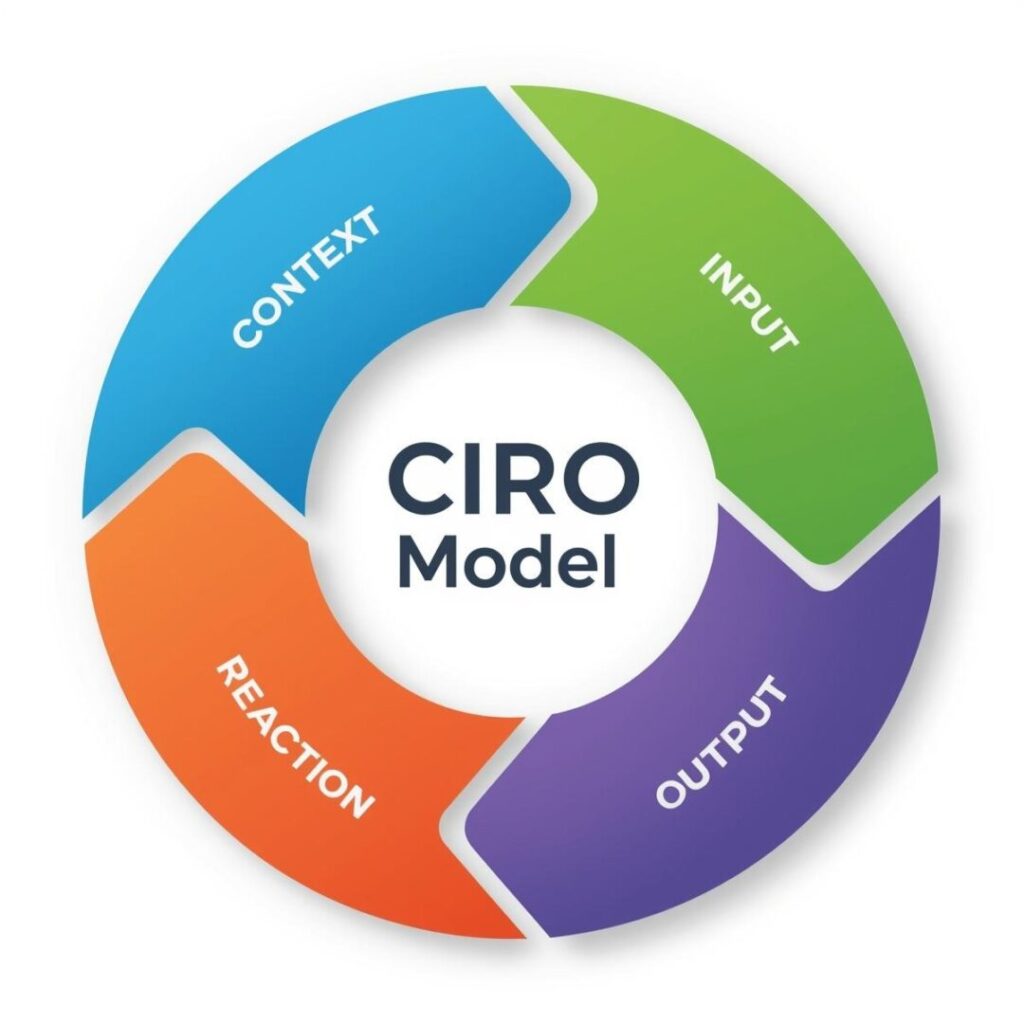

2. CIRO Model

The CIRO Model is designed to diagnose coaching needs before evaluating results, something many training evaluation models skip. Developed by Peter Warr and colleagues, it examines four stages: Context, Input, Reaction, and Outcome.

For executive coaches working with leadership teams, this model prevents launching programs without understanding the real challenge.

Framework breakdown:

- Context: Coaches analyze leadership gaps, team dynamics, decision bottlenecks, or performance barriers. This stage often includes intake assessments, leadership interviews, and alignment discussions before sessions begin.

- Input: Coaches review frameworks, session cadence, accountability systems, and progress tracking methods. The goal is to confirm that the program structure realistically supports the outcomes the organization expects.

- Reaction: Measures how participants experience the coaching engagement. Coaches track engagement levels, perceived relevance, and clarity of insights during sessions. Structured feedback surveys or automated check-ins help detect early disengagement or program friction.

- Outcome: Measures what the engagement ultimately delivers. This can include leadership performance improvement, team collaboration gains, or stronger decision-making. Outcome data typically comes from goal completion rates, leadership feedback, and long-term behavior indicators.

Why it works:

- Prevents solving the wrong problem by diagnosing leadership or organizational challenges before designing coaching interventions.

- Useful for executive and organizational coaching where cultural, structural, or leadership alignment issues affect results.

- Strengthens program design by ensuring coaching frameworks match real organizational needs.

- Improves credibility with leadership teams by linking coaching interventions directly to diagnosed performance gaps.

When to use this model?

- This model is useful when diagnosing leadership or team challenges before launching a coaching program, ensuring the real problem is identified first.

- Executive coaches often apply it when advising organizations on leadership development or culture transformation initiatives.

- It works well when designing structured coaching programs such as founder mentoring tracks or leadership accelerators.

- The model is also valuable when evaluating whether the coaching structure, accountability system, and session design truly support the desired outcomes.

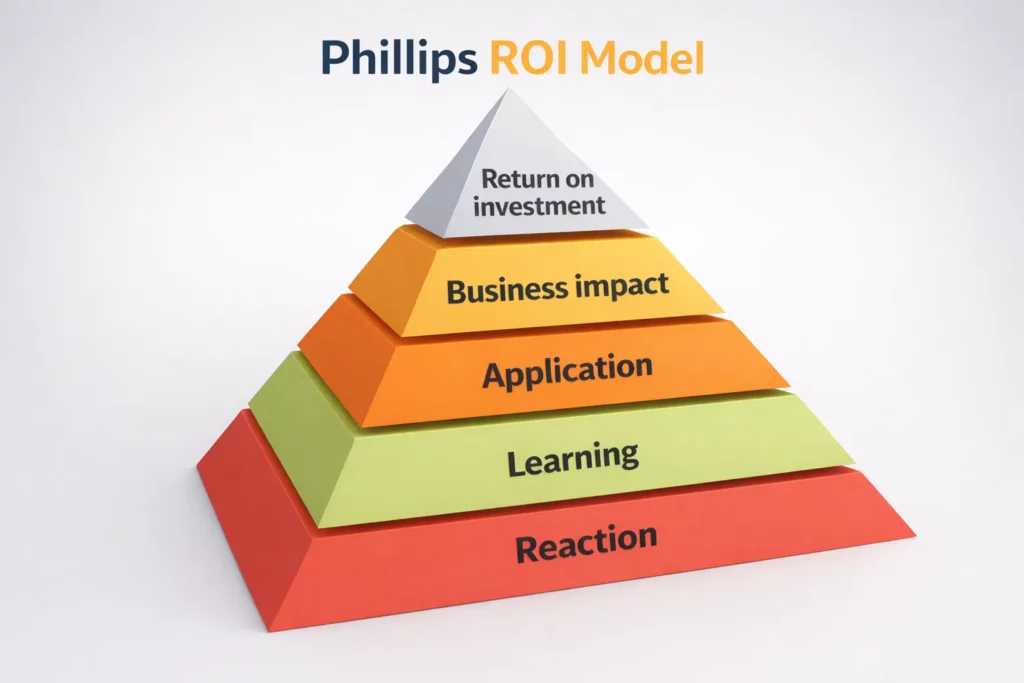

3. Phillips ROI Model

The Phillips ROI model extends traditional training evaluation models by calculating the financial return of coaching programs. Developed by Jack J. Phillips, it builds on the Kirkpatrick framework and adds a fifth level that measures economic value.

For executive coaches working with founders or leadership teams, this model transforms coaching outcomes into measurable business impact. Instead of reporting subjective transformation, coaches link coaching interventions to productivity gains, revenue growth, or retention improvements.

Framework breakdown:

- Reaction and planned action: Measures client satisfaction and their commitment to applying session insights. Coaches track session relevance, clarity of frameworks, and engagement levels. Planned action metrics often include written commitments and next-session implementation goals.

- Learning: Evaluates what clients understood and retained during coaching sessions. This may include leadership frameworks, decision-making models, or mindset shifts. Coaches validate learning through reflection prompts, quick assessments, or discussion reviews.

- Application and implementation: Tracks whether clients apply coaching strategies in real situations. Metrics include habit adoption, execution of action plans, and leadership communication improvements. Progress is monitored through weekly check-ins, accountability reviews, and goal tracking.

- Business impact: Measures how coaching influences measurable performance outcomes. This may include productivity gains, improved team performance, or revenue growth. Data typically comes from performance dashboards, leadership feedback, or engagement surveys.

- Return on investment (ROI): Calculates the financial value generated by coaching compared to program cost. Coaches convert the measured benefits into monetary values, subtract the program cost to get net benefits, and divide the net benefits by the program cost. The result, expressed as a percentage, shows whether the coaching engagement produced a positive economic return.
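The ROI step comes down to two standard Phillips calculations: the benefit-cost ratio (BCR = benefits / costs) and ROI (%) = (benefits - costs) / costs × 100. The sketch below also includes one common way of isolating the coaching effect before those calculations: an estimated improvement value is discounted by the share attributed to coaching and by the estimator's confidence in that share. The function names and all figures are hypothetical and serve only to show the arithmetic.

```python
def adjusted_benefit(improvement_value: float, coaching_share: float, confidence: float) -> float:
    """Discount an estimated improvement by the share attributed to coaching and the estimator's confidence.

    Both coaching_share and confidence are fractions between 0 and 1.
    """
    for label, value in (("coaching_share", coaching_share), ("confidence", confidence)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{label} must be between 0 and 1")
    return improvement_value * coaching_share * confidence


def benefit_cost_ratio(benefits: float, costs: float) -> float:
    """BCR = monetary benefits / fully loaded program costs."""
    if costs <= 0:
        raise ValueError("costs must be positive")
    return benefits / costs


def roi_percent(benefits: float, costs: float) -> float:
    """ROI (%) = net benefits / costs * 100, where net benefits = benefits - costs."""
    if costs <= 0:
        raise ValueError("costs must be positive")
    return (benefits - costs) / costs * 100


if __name__ == "__main__":
    # Hypothetical figures for illustration only.
    benefits = adjusted_benefit(250_000.0, coaching_share=0.8, confidence=0.75)
    costs = 60_000.0  # e.g. coaching fees, participant time, and tools
    print(f"Attributed benefits: {benefits:,.0f}")             # Attributed benefits: 150,000
    print(f"BCR: {benefit_cost_ratio(benefits, costs):.2f}")  # BCR: 2.50
    print(f"ROI: {roi_percent(benefits, costs):.0f}%")         # ROI: 150%
```

A BCR of 2.50 and an ROI of 150% describe the same result from two angles: each unit of currency spent returned 2.50 in benefits, or 1.50 in net benefits. Discounting the raw estimate before calculating keeps the figure conservative, which matters when sponsors question attribution.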

Why it works:

- Provides financial justification for coaching, which is critical in corporate or leadership development programs.

- Strengthens credibility for executive coaches by linking behavior change to measurable business outcomes.

- Supports premium coaching engagements where sponsors require proof of performance improvement.

- Encourages outcome-focused coaching design by aligning sessions with measurable business metrics.

When to use this model?

- Organizations typically use this model when stakeholders expect financial justification for executive or leadership coaching investments.

- It is highly relevant when coaching programs influence measurable metrics such as revenue growth, productivity improvements, or employee retention.

- Coaches also apply this framework when positioning premium coaching engagements that require clear performance-based outcomes.

- It works best in performance-driven environments such as sales leadership coaching or founder growth programs, where results can be quantified.

Also read: 3 Effective Models to Measure Training Outcomes Through Calculating ROI

4. Brinkerhoff Model (Success Case Method)

The Brinkerhoff Model, developed by Robert O. Brinkerhoff, takes a refreshingly practical approach to coaching evaluation. Instead of averaging everyone’s results, it focuses on two groups: the clients who achieved exceptional progress and those who struggled to implement the work.

For executive coaches running leadership cohorts, founder intensives, or structured transformation programs, this method quickly reveals what actually drives meaningful results. Often, the difference isn’t talent or motivation; it’s how consistently clients follow through on action plans, track progress, and stay accountable between sessions.

Framework breakdown:

- Identify the extremes: The coach identifies participants with the strongest outcomes and the weakest results. In leadership or founder programs, this might involve tracking goal completion rates, execution of action plans, leadership behavior changes, or engagement with program tools.

- Conduct in-depth interviews: Next, the coach interviews both high-performing and low-performing participants. For example, a founder who successfully restructured their leadership team may describe how they implemented weekly decision frameworks, while another participant may reveal they never turned session insights into structured action plans.

- Analyze and document findings: Interview insights are analyzed to identify success patterns. Coaches often discover that strong outcomes come from consistent follow-ups, clear goal-tracking dashboards, and strong accountability routines, while weaker outcomes often stem from scattered notes, unclear priorities, or inconsistent engagement with the program.

- Communicate and recommend improvements: Finally, the coach translates these findings into program improvements, such as stronger accountability checkpoints, clearer action-planning frameworks, or more structured progress tracking between sessions.
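The first step, identifying the extremes, can be a simple ranking once a screening metric is chosen. This sketch assumes goal completion rate as that metric. Brinkerhoff's method typically screens participants with a brief survey, so treat the hypothetical `Participant` structure and the ranking rule as placeholders for whatever screening data a coach actually collects.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Participant:
    """Hypothetical screening record for one cohort member."""
    name: str
    goals_set: int
    goals_completed: int

    @property
    def completion_rate(self) -> float:
        # Treat participants with no goals as 0% complete rather than dividing by zero.
        return self.goals_completed / self.goals_set if self.goals_set else 0.0


def success_case_extremes(cohort: list[Participant], n: int = 2) -> tuple[list[Participant], list[Participant]]:
    """Return (top n, bottom n) participants by goal completion rate as interview candidates."""
    ranked = sorted(cohort, key=lambda p: p.completion_rate, reverse=True)
    # Cap n so no participant lands in both the success and non-success groups.
    n = min(n, len(ranked) // 2)
    if n == 0:
        return [], []
    return ranked[:n], ranked[-n:]


if __name__ == "__main__":
    cohort = [
        Participant("Avery", 10, 9),
        Participant("Blake", 10, 3),
        Participant("Casey", 10, 7),
        Participant("Drew", 8, 1),
        Participant("Emery", 8, 8),
        Participant("Finley", 10, 5),
    ]
    top, bottom = success_case_extremes(cohort)
    print("Interview for success patterns:", [p.name for p in top])  # ['Emery', 'Avery']
    print("Interview for barriers:", [p.name for p in bottom])       # ['Blake', 'Drew']
```

The ranking only chooses whom to interview; the insight still comes from the interviews and analysis steps, where the coach learns why those participants landed at either end.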

Why it works:

- Reveals what actually drives transformation in cohort programs. This is particularly useful for coaches running leadership accelerators or founder development cohorts where outcomes vary widely.

- Turns successful client behavior into repeatable program design. For example, a coach may find that clients who used weekly decision frameworks or accountability dashboards progressed faster, and then build those tools into the program.

- Helps refine coaching programs after multiple cycles. Coaches can improve future cohorts by removing friction points that prevented implementation in earlier groups.

- Provides practical insights instead of theoretical evaluation. The model focuses on real execution patterns between sessions.

When to use this model?

- This model is ideal when evaluating leadership cohorts or group coaching programs where participant outcomes vary significantly.

- Coaches often use it when refining coaching frameworks after running multiple program cycles.

- It becomes valuable when trying to understand why some clients successfully implement coaching insights while others struggle.

- The method also helps when improving program design before scaling coaching delivery to larger groups or cohorts.

Also read: The GROW Model Template: A Proven Framework for Coaching Success

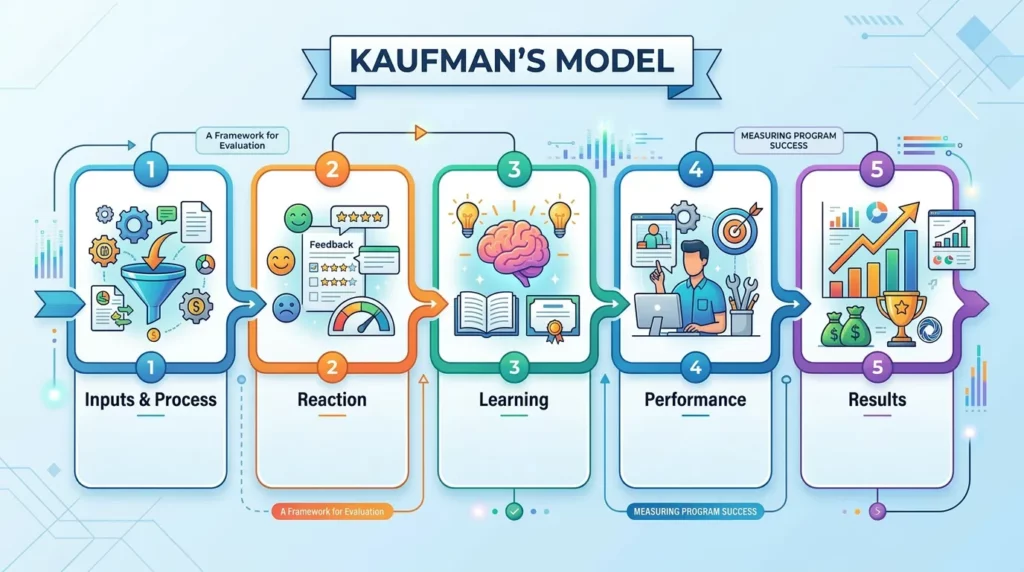

5. Kaufman’s Model

The Kaufman Model, developed by Roger Kaufman, expands traditional evaluation frameworks by examining whether the coaching system itself is designed to produce results. Many coaches track whether clients enjoyed the session. Far fewer evaluate whether their program structure, goal systems, accountability routines, and follow-up mechanisms actually support consistent behavior change.

For executive coaches running multi-week leadership intensives, transformation programs, or founder coaching engagements, this distinction matters. If the program structure is unclear, progress tracking is scattered, or accountability is weak, clients may gain insights but fail to translate them into sustained action.

Framework breakdown:

- Inputs & Process: This level evaluates how the coaching program operates day-to-day. It examines elements like session design, accountability routines, progress dashboards, and follow-up systems. Weak program inputs often show up as unclear action plans or inconsistent progress tracking.

- Reaction: Reaction captures how clients experience the sessions. Coaches track engagement levels, perceived usefulness of discussions, and clarity of next steps. In leadership coaching, early feedback often reveals if sessions feel actionable or overly theoretical.

- Learning: This stage measures whether clients actually internalize the frameworks discussed. For example, a founder may learn a strategic prioritization model, while a manager may absorb structured feedback techniques for team performance conversations.

- Performance: Performance tracks whether learning translates into behavior. Coaches monitor goal completion rates, habit adoption, leadership decision patterns, and implementation of new communication practices between sessions.

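- Results: Finally, the framework measures the long-term outcomes created by coaching. This could include stronger leadership effectiveness, improved team productivity, successful role transitions, or measurable business performance improvements. Kaufman’s original model also adds a societal level, asking whether these results create value beyond the organization itself.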

Why it works:

- Diagnoses whether results depend on program design or client effort. This is especially valuable for coaches running structured transformation programs or leadership cohorts.

- Improves consistency in multi-week coaching programs by strengthening goal systems, accountability routines, and progress tracking frameworks.

- Helps scale coaching delivery without losing impact. Coaches can refine program structure before expanding cohorts, leadership intensives, or certification programs.

- Links coaching insights to measurable performance outcomes, making it easier to demonstrate leadership improvement, productivity gains, or successful career transitions.

When to use this model?

- Coaches use this model when evaluating whether the coaching program structure itself supports consistent behavior change and measurable results.

- It is particularly useful for multi-week transformation programs where delivery systems directly influence client outcomes.

- The framework also helps when scaling coaching cohorts or certification programs that require standardized evaluation systems.

- It works well when linking coaching insights to long-term performance improvements such as leadership effectiveness or career advancement.

Also read: How Coaches Can Improve Coachability and Drive Better Client Results

6. Anderson’s Model

Many organizations launch coaching initiatives assuming leadership capability is the root issue. In reality, some challenges stem from process gaps, unclear strategy, or structural misalignment. Anderson’s Model of Learning Evaluation addresses this by asking a more strategic question first: “Is coaching actually the right solution?”

Developed by Valerie Anderson, this framework connects coaching initiatives directly to business strategy and organizational priorities. Instead of evaluating coaching after the program begins, it diagnoses whether coaching should be implemented at all. For executive coaches working with organizations, this model prevents misdirected interventions.

Framework breakdown:

- Strategic alignment assessment: The coach begins by reviewing organizational priorities, leadership performance metrics, and operational challenges. This step confirms whether coaching directly supports strategic objectives such as leadership capability, decision-making quality, or team performance.

- Intervention selection: Next, the coach evaluates whether coaching is the right intervention. Some challenges require leadership training, clearer workflows, or organizational restructuring instead.

- Business value estimation: Before launching the program, expected outcomes are defined. These may include leadership productivity improvements, stronger team engagement, or reduced management friction. Establishing these benchmarks early helps stakeholders justify the coaching investment.

- Results and strategic impact measurement: After the program concludes, outcomes are measured against the original objectives. This includes behavioral leadership improvements, operational performance gains, or measurable team effectiveness changes.
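The business value estimation and results steps depend on agreeing benchmarks before launch and comparing against them afterward. Here is a minimal sketch, assuming each objective is recorded as a baseline, a target, and a measured result; the `Objective` structure, metric names, and numbers are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Objective:
    """Hypothetical benchmark agreed with stakeholders before the program launches."""
    metric: str
    baseline: float
    target: float
    actual: float
    higher_is_better: bool = True

    def met(self) -> bool:
        """True if the measured result reached the pre-agreed target."""
        if self.higher_is_better:
            return self.actual >= self.target
        return self.actual <= self.target

    def change_pct(self) -> float:
        """Percentage change from baseline to the measured result."""
        if self.baseline == 0:
            raise ValueError(f"baseline for {self.metric!r} must be non-zero")
        return (self.actual - self.baseline) / self.baseline * 100


def scorecard(objectives: list[Objective]) -> dict[str, tuple[float, bool]]:
    """Map each metric to (percent change from baseline, target met?)."""
    return {o.metric: (round(o.change_pct(), 1), o.met()) for o in objectives}


if __name__ == "__main__":
    objectives = [
        Objective("team_engagement_score", baseline=62, target=70, actual=71),
        Objective("regretted_attrition_pct", baseline=18, target=12, actual=14, higher_is_better=False),
    ]
    for metric, (change, met) in scorecard(objectives).items():
        print(f"{metric}: {change:+.1f}% vs baseline, target met: {met}")
```

Fixing the targets during the estimation step, before any coaching happens, is what lets the final comparison show whether the program delivered what stakeholders were promised rather than whatever happened to improve.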

Why it works:

- Ensures coaching programs are launched only when they directly support organizational strategy and leadership priorities.

- Prevents organizations from using coaching to solve problems that actually stem from broken processes or unclear systems.

- Connects leadership development initiatives to measurable performance outcomes expected by senior stakeholders.

When to use this model?

- Use this model when working with organizations that want to ensure coaching directly supports leadership or business strategy.

- It works well when diagnosing whether a leadership challenge requires coaching, training, or operational redesign.

- Coaches apply it when aligning executive coaching programs with measurable business priorities such as performance, retention, or team effectiveness.

- It is especially valuable when stakeholders expect coaching investments to demonstrate clear organizational impact.

Also read: 3 Proven Strategies for Scaling Coaching in Large Organizations

7. CIPP Model

Some coaching programs appear productive on the surface. Clients attend sessions, discussions feel insightful, and feedback is positive, yet long-term change never materializes. The CIPP Model helps coaches diagnose why.

Developed by Daniel Stufflebeam, this framework evaluates the entire coaching lifecycle. It examines four stages (Context, Input, Process, and Product) to reveal where results succeed or quietly break down.

For coaches running structured transformation programs, leadership cohorts, or multi-session engagements, this model acts like a diagnostic system. Instead of discovering problems after a program ends, it allows coaches to identify and correct friction points while the program is still running.

Framework breakdown:

- Context evaluation: The coach first clarifies the real problem the program must address, such as leadership capability gaps, stalled career progression, or team communication breakdowns.

- Input evaluation: Next, the coach reviews the frameworks, tools, and accountability systems supporting the program. This includes goal-tracking dashboards, reflection exercises, and structured action planning between sessions.

- Process monitoring: During delivery, the coach tracks how the program operates in practice. Indicators include session attendance, action-step follow-through, and participation in reflection exercises.

- Product evaluation: Finally, the coach measures the outcomes created by the program. These may include leadership performance improvements, career advancement milestones, or measurable habit adoption.
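The process monitoring stage is where a lightweight check at each program checkpoint helps most. Below is a minimal sketch, assuming the coach records scheduled versus attended sessions and assigned versus completed action steps per participant; the `CheckpointData` structure and the 80% attendance and 60% follow-through thresholds are hypothetical choices, not part of the CIPP Model.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class CheckpointData:
    """Hypothetical process data for one participant at one program checkpoint."""
    participant: str
    sessions_scheduled: int
    sessions_attended: int
    action_steps_assigned: int
    action_steps_completed: int


def process_flags(
    data: list[CheckpointData],
    min_attendance: float = 0.8,
    min_follow_through: float = 0.6,
) -> dict[str, list[str]]:
    """Return participants whose attendance or action-step follow-through is below threshold."""
    flags: dict[str, list[str]] = {}
    for d in data:
        # With nothing scheduled or assigned yet, there is no evidence of a problem, so default to 1.0.
        attendance = d.sessions_attended / d.sessions_scheduled if d.sessions_scheduled else 1.0
        follow_through = d.action_steps_completed / d.action_steps_assigned if d.action_steps_assigned else 1.0
        issues = []
        if attendance < min_attendance:
            issues.append("attendance")
        if follow_through < min_follow_through:
            issues.append("follow-through")
        if issues:
            flags[d.participant] = issues
    return flags


if __name__ == "__main__":
    checkpoint = [
        CheckpointData("P1", 4, 4, 6, 5),
        CheckpointData("P2", 4, 2, 6, 3),
        CheckpointData("P3", 4, 4, 6, 2),
    ]
    print(process_flags(checkpoint))
    # {'P2': ['attendance', 'follow-through'], 'P3': ['follow-through']}
```

If most of a cohort is flagged for the same issue at a checkpoint, that pattern may point to a program design problem rather than individual follow-through, which is the distinction the CIPP Model is meant to surface while the program is still running.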

Why it works:

- Evaluates the full lifecycle of a coaching program instead of focusing only on final outcomes.

- Helps coaches detect engagement drops, weak accountability, or stalled implementation while the program is still running.

- Separates whether progress problems originate from program design flaws or client follow-through gaps.

- Supports continuous improvement by allowing program adjustments during active cohorts rather than after completion.

When to use this model?

- Use this model when running multi-month coaching programs where progress must be evaluated at multiple checkpoints.

- It is especially helpful when refining delivery during active cohorts instead of waiting until the program ends.

- Coaches apply it when diagnosing whether stalled progress comes from program design issues or weak client implementation.

- It works well for team coaching and leadership development programs where multiple participants influence outcomes.

Also read: Life Map for Coaches: How to Create One, Benefits, Techniques, and Templates

8. Kraiger’s Framework

Many clients leave coaching sessions with strong conceptual clarity, yet their real-world execution barely changes. Kraiger’s Three-Dimensional Framework helps coaches understand why. Developed by Kurt Kraiger, the model evaluates learning across three areas: knowledge, capability, and motivation.

Framework breakdown:

- Cognitive outcomes (understanding): This dimension evaluates how well clients genuinely understand the frameworks discussed during coaching, using methods such as scenario walkthroughs, reflection exercises, and structured concept reviews.

- Skill-based outcomes (execution ability): Next, the coach evaluates if clients can actually perform the behaviors required by the framework. Examples include running structured feedback conversations, leading team planning sessions, or applying negotiation strategies.

- Affective outcomes (motivation & confidence): The final dimension evaluates confidence, emotional readiness, and willingness to implement change. Many clients understand a strategy but hesitate to apply it due to discomfort or perceived risk.

Why it works:

- Distinguishes whether clients lack conceptual understanding, execution capability, or motivation to apply new behaviors.

- Reveals why many leadership programs generate strong insights but fail to produce consistent implementation.

- Helps coaches design interventions that build both decision frameworks and practical execution skills.

- Highlights when stalled progress is caused by confidence barriers rather than knowledge gaps.

When to use this model?

- Use this model when clients understand frameworks intellectually but struggle to apply them consistently in real situations.

- It works well when coaching programs combine mindset transformation with leadership or performance skill development.

- Coaches apply it when diagnosing whether stalled progress comes from capability gaps or confidence barriers.

- It is valuable when refining behavioral coaching programs that aim to translate insights into consistent execution.

Also read: Behavioral Coaching: 4 Modalities to Transform Client Outcomes (2026)

9. Guskey’s Professional Development Evaluation

Many development programs stop at post-session satisfaction surveys. Participants report that the session was useful, the program ends, and no one checks whether anything actually changed afterward.

Thomas R. Guskey designed his framework to evaluate the full chain of impact, from participant experience to the measurable results created in the environment where those participants operate. Guskey’s model ensures evaluation connects coaching insights to real-world behavioral and organizational outcomes.

Framework breakdown:

- Participant reactions: This level captures how participants experience the coaching sessions, including engagement levels, perceived relevance, and clarity of insights.

- Participant learning: The coach evaluates whether participants actually understood the frameworks and concepts discussed during coaching. This may involve reflection prompts, scenario discussions, or structured learning reviews.

- Organizational support and environment: This stage examines whether the work environment supports the behaviors participants are expected to implement. Leadership expectations, company culture, and operational systems can either reinforce or block new actions.

- Application of knowledge and skills: At this stage, the focus shifts to actual behavioral implementation. Coaches track how consistently participants apply leadership practices, communication frameworks, or decision-making strategies in their daily work.

- Organizational results: The final level evaluates the downstream results created by those behavioral changes. In leadership coaching contexts, this may appear as improved team engagement, stronger collaboration, productivity gains, or higher performance outcomes.

Why it works:

- Moves evaluation beyond satisfaction surveys by connecting coaching insights to real behavioral change.

- Works particularly well for leadership coaching programs where manager behavior must influence team performance.

- Examines whether the surrounding organizational environment supports or blocks implementation.

- Links leadership development outcomes to measurable indicators such as team engagement, productivity, or collaboration quality.

When to use this model?

- Use this model when coaching programs operate within organizations where leadership behavior must influence team or business outcomes.

- It is especially useful when clients want evidence that coaching insights translate into measurable workplace improvements.

- Coaches apply it when evaluating whether organizational culture or leadership structures affect implementation.

- It works well for leadership development initiatives where participant learning must produce broader organizational impact.

Related: 8 Benefits of Executive Coaching & How It Can Help on an Organisational Level

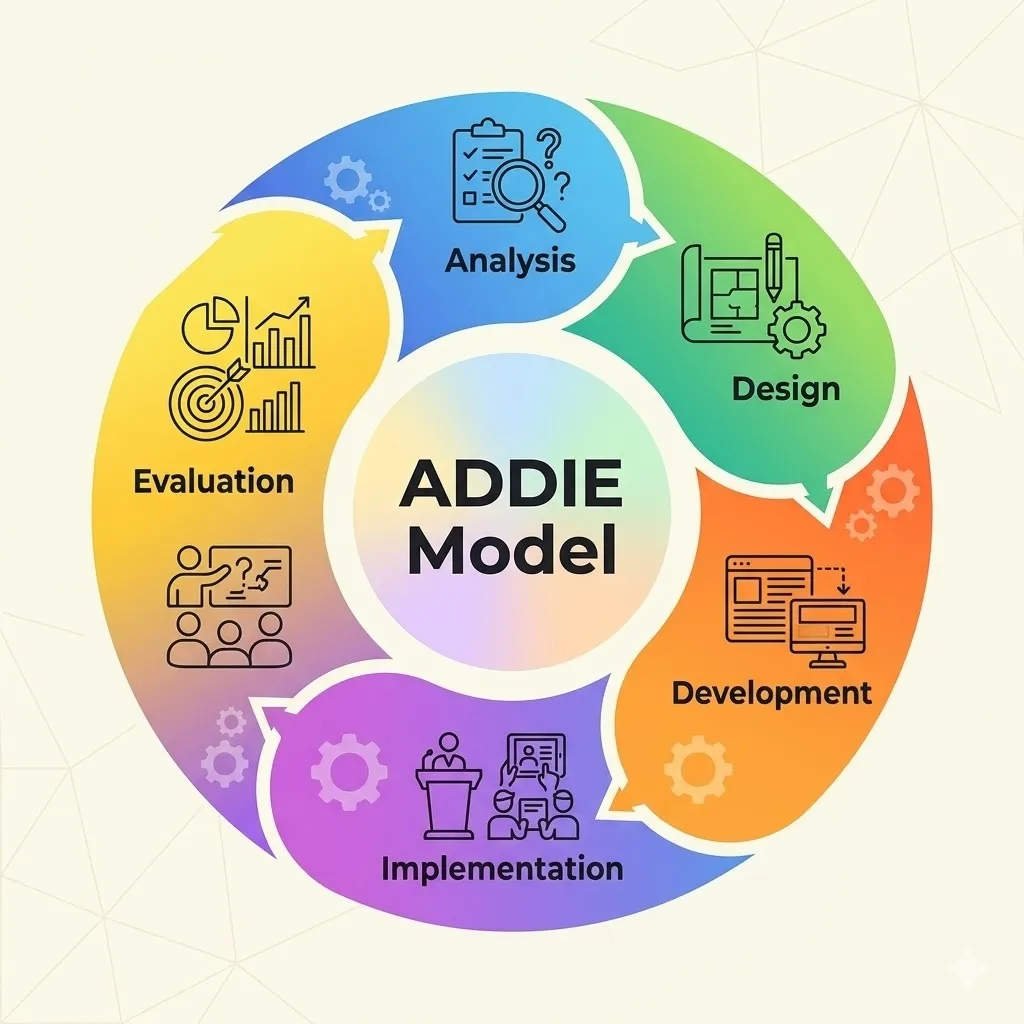

10. ADDIE Model

Many coaching programs progress organically. A single session turns into a short engagement, which gradually becomes a cohort or signature program. The ADDIE Model offers a systematic approach to designing learning programs from the ground up. Widely used in instructional design, it breaks program creation into five stages: Analysis, Design, Development, Implementation, and Evaluation.

Framework breakdown:

- Analysis: The coach first identifies the core client problem the program must solve, such as stalled career progression, leadership bottlenecks, or recurring decision-making challenges.

- Design: At this stage, the coach structures the program journey, including session themes, progress checkpoints, accountability routines, and outcome metrics.

- Development: The coach builds the assets required to run the program effectively. These may include session frameworks, reflection worksheets, goal-tracking dashboards, and structured coaching prompts.

- Implementation: The coaching program is delivered while monitoring session engagement, action-step follow-through, and early behavioral changes.

- Evaluation: Finally, the program’s performance is reviewed using engagement data, behavioral change indicators, and outcome metrics.

Why it works:

- Introduces a structured approach for building coaching programs instead of letting them grow informally.

- Clarifies the client problem, program structure, and expected outcomes before delivery begins.

- Supports scalable coaching programs by creating repeatable frameworks and delivery assets.

- Enables continuous program refinement by reviewing behavioral outcomes and engagement data after each cohort.

When to use this model?

- Use this model when building a structured signature coaching program instead of delivering ad-hoc sessions.

- It is particularly valuable when transitioning from one-on-one coaching to cohort-based programs.

- Coaches apply it when creating repeatable frameworks that reduce preparation time for each client engagement.

- It works well when scaling coaching delivery while maintaining consistent client outcomes.

Knowing the frameworks is useful, but their real value comes from the advantages they bring to coaching and development programs.

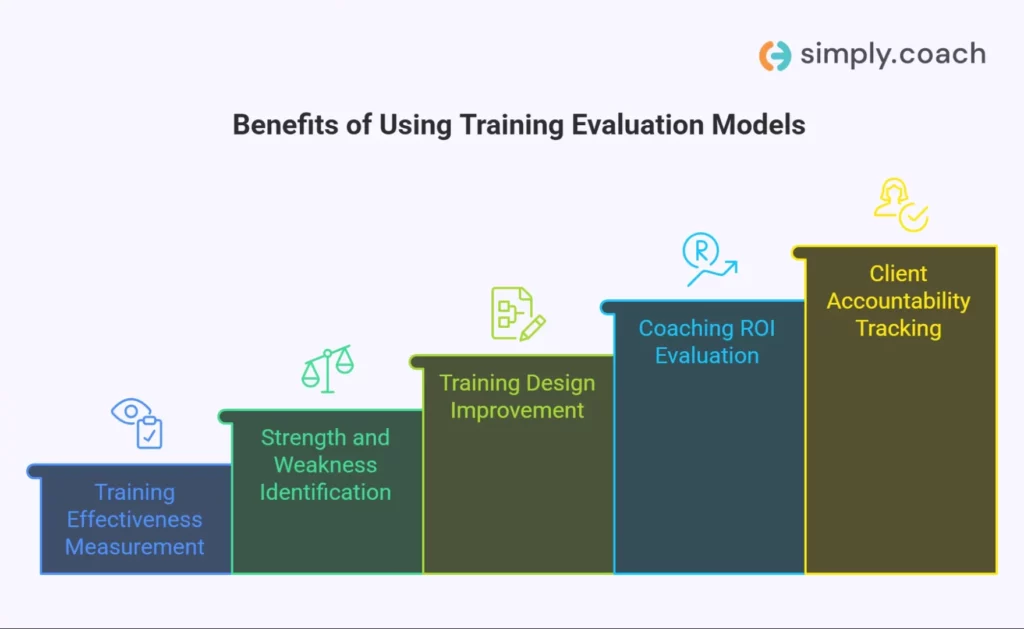

5 Benefits of Using Training Evaluation Models

When coaches manage multiple engagements or leadership cohorts, relying on intuition alone makes it difficult to track client progress. That becomes a problem when clients ask a direct question: “Is this actually working?” Training evaluation models solve that by turning progress into observable data.

Here are five key benefits.

1. Measure training effectiveness

Evaluation frameworks track whether coaching actually changes behavior. Metrics like goal completion rate, habit adherence, and follow-through between sessions show whether progress is happening outside the call.
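To make these two metrics concrete, here is a minimal Python sketch of how they might be calculated from a coach's own records. It assumes goal completion rate is completed goals divided by total goals, and habit adherence is practiced days divided by planned days. The `Goal` record, the function names, and the sample goals are hypothetical illustrations, not part of any coaching platform's API.

```python
from dataclasses import dataclass


@dataclass
class Goal:
    # Hypothetical record of one client goal agreed in a session
    name: str
    completed: bool


def goal_completion_rate(goals: list[Goal]) -> float:
    """Share of goals marked complete, as a percentage (0.0 if there are no goals)."""
    if not goals:
        return 0.0
    done = sum(1 for g in goals if g.completed)
    return 100.0 * done / len(goals)


def habit_adherence(planned_days: int, practiced_days: int) -> float:
    """Percentage of planned practice days the client actually logged, capped at 100."""
    if planned_days <= 0:
        return 0.0
    return 100.0 * min(practiced_days, planned_days) / planned_days


goals = [
    Goal("Delegate the weekly report", True),
    Goal("Hold weekly 1:1s", True),
    Goal("Give one piece of feedback per day", False),
    Goal("Block two hours of focus time", True),
]
print(f"Goal completion: {goal_completion_rate(goals):.0f}%")
print(f"Habit adherence: {habit_adherence(20, 16):.0f}%")
```

Tracking both numbers side by side is the point: a client can complete session goals while still missing most planned practice days, which is the "progress outside the call" gap described above.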

2. Identify strengths and weaknesses

Patterns quickly emerge across clients. For example, strong engagement but weak implementation may indicate unclear action plans or insufficient accountability between sessions.

3. Enhance training design

Evaluation data highlights which exercises, frameworks, or session structures create the strongest client breakthroughs. Coaches can refine program flow and eliminate activities that produce little behavioral impact.

4. Improve ROI on training investments

Clients investing in leadership or career coaching want measurable results. Tracking outcome metrics such as promotions, revenue growth, and team performance helps demonstrate the financial value of the coaching engagement.
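One common way to express this value is the ROI formula associated with the Phillips ROI Model: net program benefits divided by program costs, multiplied by 100, often reported alongside the benefit-cost ratio (BCR). The Python sketch below assumes the benefits have already been converted to a monetary value and isolated from other factors, which is usually the hardest part in practice. The function names and the figures are illustrative assumptions, not real client data.

```python
def benefit_cost_ratio(benefits: float, costs: float) -> float:
    """Monetary program benefits divided by fully loaded program costs."""
    if costs <= 0:
        raise ValueError("costs must be positive")
    return benefits / costs


def roi_percent(benefits: float, costs: float) -> float:
    """ROI as a percentage: (benefits - costs) / costs * 100."""
    if costs <= 0:
        raise ValueError("costs must be positive")
    return (benefits - costs) / costs * 100.0


# Illustrative figures only: estimated annual benefit attributed to the
# coaching engagement, and its total cost (fees, participant time, tools).
benefits = 60_000.0
costs = 40_000.0
print(f"BCR: {benefit_cost_ratio(benefits, costs):.2f}")
print(f"ROI: {roi_percent(benefits, costs):.0f}%")
```

With these assumed figures, the program returns 1.50 in benefits for every 1.00 spent, a 50% ROI. A negative ROI would mean the estimated benefits did not cover the costs.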

5. Promote accountability

Structured evaluation creates clear expectations for both coach and client. Progress dashboards, automated check-ins, and weekly milestone tracking reduce stalled goals and improve follow-through.

For a real-world example, see How Sensiba Built a Scalable Coaching Culture with Simply.Coach, which shows how structured evaluation and feedback systems supported measurable growth across their coaching programs.

Despite these advantages, implementing evaluation frameworks consistently can present practical challenges.

3 Challenges in Implementing Training Evaluation Models

Many coaches avoid formal evaluation systems, not because they disagree with the concept, but because implementation feels time-consuming or overly technical.

Understanding these barriers helps coaches design practical systems that track outcomes without adding administrative overload.

1. Limited resources and time constraints

Many coaches manage evaluation manually across spreadsheets and notes. Without streamlined systems for progress tracking, gathering consistent data becomes difficult.

2. Stakeholder buy-in

In corporate coaching engagements, leaders may support coaching but resist structured measurement. Without agreement on evaluation metrics, proving program impact becomes harder.

3. Complexity of evaluation models

Some frameworks contain multiple levels and metrics that feel overwhelming. Coaches often benefit from simplifying the model to focus on a few meaningful performance indicators.

Addressing these challenges requires structured processes that make evaluation easier to apply in real coaching programs.

Also read: Building a Successful Coaching Program in 7 Key Steps

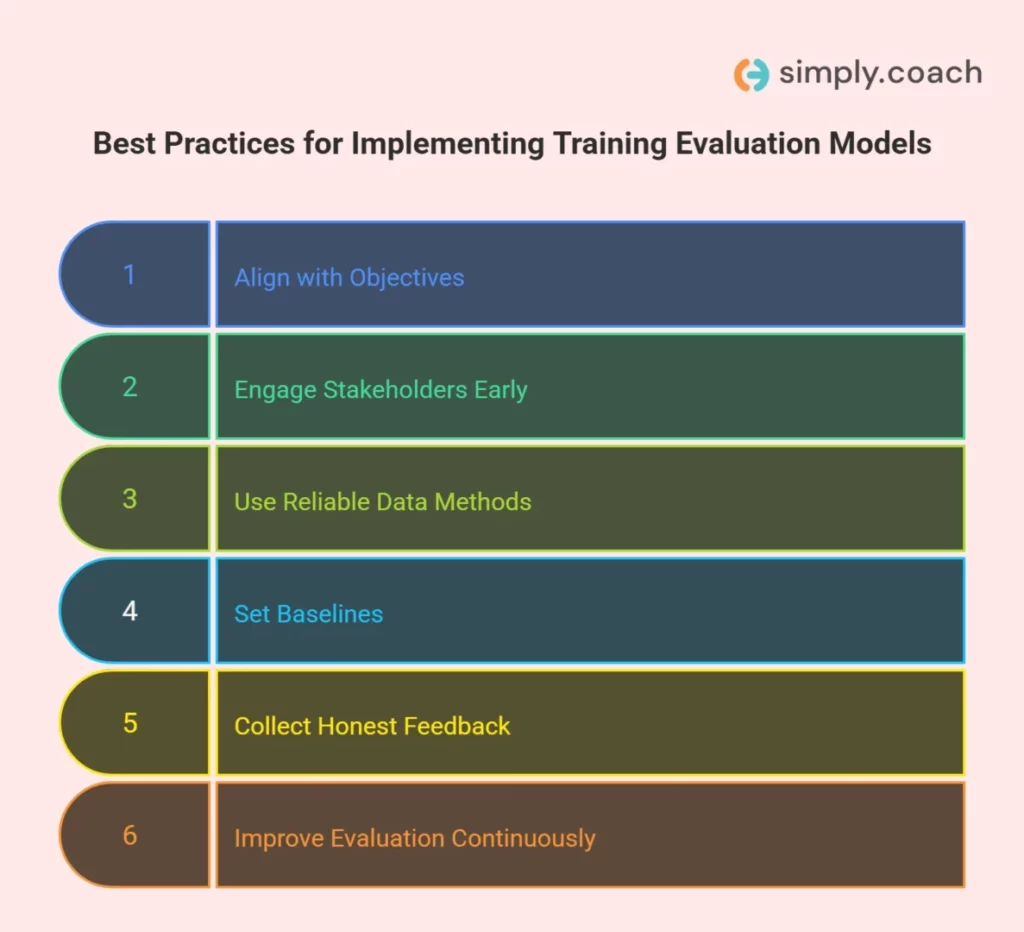

Mastering Training Evaluation Models – 6 Best Practices

Coaches do not need complex analytics to measure progress, just clear metrics that reflect real behavioral change.

These practices help integrate evaluation into coaching programs without adding unnecessary administrative work.

1. Align evaluation with training objectives

Evaluation becomes meaningless when metrics do not reflect the actual goal of the coaching program. Many programs default to easy indicators like session attendance or satisfaction scores, which reveal little about development.

Strong evaluation focuses on observable behaviors tied directly to the program objective, such as decision-making quality, conflict management, delegation patterns, or feedback frequency.

2. Involve stakeholders from the beginning

In leadership and organizational coaching, success is often defined by multiple parties, including the client, their manager, and HR sponsors.

If evaluation criteria are not aligned early, coaches may demonstrate progress that sponsors fail to recognize. Early agreement on behavioral indicators, reporting expectations, and success definitions prevents evaluation disputes later.

3. Develop reliable data collection methods

Evaluation frequently breaks down because data collection is inconsistent or dependent on memory. Informal notes and sporadic reflections make it difficult to track real patterns. Structured systems such as regular progress check-ins, stakeholder feedback loops, and documented behavioral observations create a consistent record of change over time.

4. Set baseline measures

One of the most common evaluation mistakes is starting measurement after coaching has already begun. Without a clear baseline, improvements become difficult to verify and often rely on subjective perception.

Effective baselines combine self-assessment, stakeholder input, and observable behavioral indicators so progress reflects real change rather than optimistic self-reporting.
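As a rough sketch of how a baseline comparison might work, the Python example below averages ratings for one behavior by source (self, manager, peers) at baseline and at follow-up, then checks whether the self-reported change outpaces what stakeholders observed. The 1-5 rating scale, the source names, the function names, and the sample ratings are all hypothetical.

```python
from statistics import mean

# Ratings on a hypothetical 1-5 scale for one observable behavior
# (e.g. "delegates decisions"), grouped by who gave them.
Ratings = dict[str, list[float]]


def source_means(ratings: Ratings) -> dict[str, float]:
    """Average rating per source, skipping sources with no ratings."""
    return {source: mean(scores) for source, scores in ratings.items() if scores}


def change_from_baseline(baseline: Ratings, follow_up: Ratings) -> dict[str, float]:
    """Follow-up mean minus baseline mean, for sources rated at both points."""
    before = source_means(baseline)
    after = source_means(follow_up)
    return {s: after[s] - before[s] for s in before if s in after}


def self_vs_stakeholder_gap(changes: dict[str, float], self_key: str = "self") -> float:
    """Self-reported change minus the average stakeholder change.

    Requires at least one non-self source. A large positive gap can
    signal optimistic self-reporting.
    """
    others = [v for k, v in changes.items() if k != self_key]
    return changes[self_key] - mean(others)


baseline = {"self": [3, 3, 4], "manager": [2, 3, 2], "peers": [3, 2, 3, 2]}
follow_up = {"self": [5, 5, 4], "manager": [3, 3, 4], "peers": [3, 3, 4, 3]}

changes = change_from_baseline(baseline, follow_up)
for source, delta in changes.items():
    print(f"{source}: {delta:+.2f}")
print(f"self vs stakeholder gap: {self_vs_stakeholder_gap(changes):+.2f}")
```

Here every source reports improvement, but the client rates their own change higher than the manager and peers do, which is exactly the kind of difference a self-assessment-only baseline would hide.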

5. Encourage honest feedback

Clients and participants often hesitate to critique coaching programs directly, especially in executive or organizational settings. This can lead to overly positive feedback that hides real challenges.

Structured reflection prompts, anonymous feedback channels, and periodic progress reviews help surface friction points, resistance patterns, and barriers to behavior change that would otherwise remain unspoken.

6. Continuously improve the evaluation process

Evaluation frameworks should evolve with each coaching engagement or cohort. Programs that rigidly follow a static model often accumulate irrelevant metrics or outdated assumptions.

Reviewing evaluation data after each program helps identify which indicators actually predicted behavior change and which created unnecessary complexity, allowing coaches to refine their evaluation approach over time.

Applying these practices helps coaches turn evaluation from a reporting exercise into a system that continuously strengthens client outcomes and program impact.

Also read: Top 14 Coaching Models Every Professional Coach Should Master

Turn Every Training Evaluation Session into Quantifiable Client Progress Using Simply.Coach

As a coach, you know the frustration: you run a leadership workshop or team training, but weeks later, it’s hard to prove real change. Did your clients adopt the skills? Did team dynamics actually improve? With Simply.Coach, you can track every participant’s progress, measure skill adoption, and see tangible ROI without drowning in spreadsheets or chasing feedback. From executive programs to multi-client cohorts, the platform makes training evaluation structured, actionable, and completely client-focused.

Here’s how it benefits you:

- Goal & development planning: Define clear evaluation metrics for each training module. Automated progress check-ins keep clients and teams accountable and make outcomes measurable.

- Forms for coaches: Capture pre- and post-training assessments, self-reflection exercises, and competency checklists digitally. Automated collection and reminders increase response rates, making evaluation data reliable, accurate, and actionable for behavioral or performance KPIs.

- Action plans & nudges: Assign evaluation-related tasks, such as practicing newly learned skills or completing reflective exercises. Automated nudges ensure consistent participation, enhancing skill retention and tracking measurable progress across participants.

- Reports & stakeholder integration: Generate comprehensive training evaluation reports with client and stakeholder feedback, showing KPIs like performance improvement, engagement levels, and behavior change.

- Client workspaces: Provide participants with a central hub to track their development, complete exercises, and review session notes. This visibility increases engagement, encourages self-assessment, and supports measurable progress in alignment with training objectives.

- Scheduling & embedded video conferencing: Run evaluation sessions, follow-ups, or team debriefs seamlessly. Automated scheduling reduces no-shows and ensures every evaluation touchpoint is captured, boosting participation KPIs and maintaining program fidelity.

Simply.Coach lets you turn your training evaluation programs into data-driven and scalable coaching initiatives, giving you clarity on client outcomes, behavioral improvements, and ROI, all while freeing you to focus on enhancing skills and facilitating lasting change.

Conclusion

Most coaching improvements happen in subtle ways: better decisions, stronger habits, and clearer leadership. Training evaluation models help you capture those changes instead of relying on gut feeling. Frameworks like Kirkpatrick, Phillips ROI, CIRO, and Kraiger give you ways to track what’s actually shifting for clients: goals getting completed, behaviors sticking, and outcomes improving over time.

Knowing the frameworks isn’t usually the hard part. The real challenge shows up during a busy week of back-to-back sessions, when you’re also writing notes, following up with clients, and preparing progress reports. When everything lives in different places, measuring real coaching outcomes starts to feel like just another task competing for your attention.

That’s where an all-in-one coaching management platform like Simply.Coach helps turn evaluation frameworks into part of your everyday workflow. With goal & development planning, progress check-ins, and action plans, you can monitor measurable client progress between sessions. Features like reports and stakeholder feedback make it much easier to demonstrate real coaching impact to clients and sponsors.

FAQs

1. What are training evaluation models?

Training evaluation models are structured frameworks used to measure how effective a training or coaching program is. They assess factors like participant engagement, learning retention, behavior change, and real-world outcomes.

2. What are the most common training evaluation models?

Some of the most widely used training evaluation models include:

- Kirkpatrick Model

- Phillips ROI Model

- CIRO Model

- Brinkerhoff Success Case Method

- Kaufman’s Model of Learning Evaluation

- Kraiger’s Three-Dimensional Framework

- CIPP Model

Each framework measures different aspects of program effectiveness, from participant feedback to business impact.

3. Why are training evaluation models important?

Evaluation models help coaches and organizations determine whether training actually improves performance. Without structured evaluation, it becomes difficult to prove whether coaching sessions create real behavioral change.

4. What is the Kirkpatrick Model of training evaluation?

The Kirkpatrick Model evaluates training effectiveness across four levels: Reaction, Learning, Behavior, and Results. It begins by measuring participant satisfaction and progresses toward tracking real-world outcomes such as performance improvement or organizational impact.

5. How do you measure training effectiveness?

Training effectiveness is typically measured using a combination of qualitative and quantitative metrics. Common indicators include participant feedback, knowledge retention, behavioral implementation, goal completion rates, and productivity improvements.

6. What is the Phillips ROI Model?

The Phillips ROI Model extends the Kirkpatrick Model by adding a fifth level that calculates the financial return generated by training programs. It measures five levels: reaction, learning, application, business impact, and return on investment, helping organizations quantify the economic value of training initiatives.

7. What challenges do organizations face when implementing training evaluation models?

Common challenges include limited time for data collection, lack of stakeholder alignment on success metrics, and overly complex evaluation frameworks.

Many organizations also struggle with fragmented data systems, making it difficult to track behavioral changes and long-term outcomes consistently.

8. Which training evaluation model is best?

There is no single best model. The right framework depends on the goals of the program. For example, the Kirkpatrick Model works well for general evaluation, while the Phillips Model is better suited for measuring financial impact and ROI.

About Simply.Coach

Simply.Coach is an enterprise-grade coaching platform designed for individual coaches and coaching businesses. Trusted by ICF-accredited and EMCC-credentialed coaches worldwide, Simply.Coach is on a mission to elevate the experience and process of coaching with technology-led tools and solutions.